I have been using Ubuntu as my desktop OS for over 15 years now. I came to it by using it on a backup computer for a task that my primary computer, running Windows XP, failed at whenever I tried to do anything else concurrently. I didn’t want to buy another computer, or buy another XP license and put it on an old computer whose specs were very marginal for XP. So I thought I’d try this free OS and see whether the old computer could do the task that my new XP computer would only do if nothing else was running at the same time.

Ubuntu got the job done. I recorded my vinyl albums to sound files, split the sound files into tracks like on the albums, and then burned the tracks onto CDs so I could carry my music around more conveniently and listen to it in more places. XP could do this too, but the sound files came out corrupt or the burn failed if I did anything else, like opening my word processor, spreadsheet, or web browser, while recording or burning was in progress.

Now I had two pcs running and would switch between them as needed to keep the vinyl-to-CD process going. That was a little inconvenient because my workspace didn’t let me put the two pcs right next to each other. Switching wasn’t a case of moving my hands from one keyboard and mouse to another, or flipping a switch on a KVM. Getting to the Ubuntu pc late after an album side ended meant extra time spent trimming the tail off the audio file. This led me to try some things on the Ubuntu pc, like opening the word processor or spreadsheet or browsing the web while recording or burning was in progress, to see if it caused problems. Amazingly, it didn’t! A lower-spec pc running Ubuntu could do more of what I wanted, without errors, than my much better XP desktop.

Using the Ubuntu pc while converting my albums to CD led me to Ubuntu for home use. My professional life was and still is Windows, but at home it’s Ubuntu. And Ubuntu is still preferred at home because it doesn’t mysteriously prevent me from doing things, inconveniently interrupt me, or insist on having information I don’t want to share like Windows does. That is, until ZFS in Ubuntu 20.04 started preventing me from doing updates on my primary and backup pcs because of a lack of space.

I’ve run out of space on Windows and Ubuntu before. It just meant time to finally do some housekeeping and get rid of large chunks of files, like virtual machines, that I hadn’t used in a while. Do that and boom, back to work! Not so with ZFS. Do that and gloom, still can’t do updates.

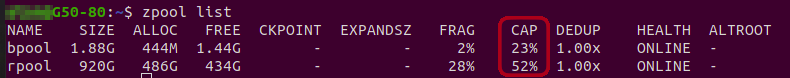

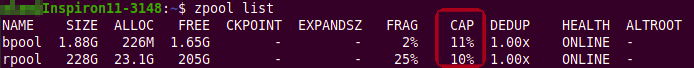

The two pcs showed different error messages: one said bpool free space was below 20%, the other said rpool free space was below 20%. Rpool and bpool, what are they? And why is updating prevented when there’s nearly 20% free space? And why, after deleting or moving tens of gigabytes of files off the drive and purging old kernels (a Linux thing), are updates still prevented, with rpool and bpool still reporting less than 20% free? Gigs of files were just moved off the drive, and these rpool and bpool things don’t reflect that!
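For reference, bpool is the small ZFS pool that the Ubuntu 20.04 ZFS installer creates for /boot, and rpool holds the root filesystem and home directories. The 20% figure appears to be a minimum free-space requirement that ZSys, the tool that snapshots the system before updates, applies before it will take a snapshot. Below is a minimal Python sketch of that kind of check. It assumes the check compares pool-level free space to pool size and takes its input from `zpool list -H -p -o name,size,free`, where `-H` drops the header and tab-separates the columns and `-p` prints exact byte counts. The function names and sample numbers are hypothetical, and the real ZSys check may measure free space differently.

```python
from __future__ import annotations

# Hypothetical threshold matching the "below 20%" error messages.
MIN_FREE_FRACTION = 0.20


def parse_zpool_list(text: str) -> dict[str, tuple[int, int]]:
    """Map pool name -> (size_bytes, free_bytes) from `zpool list -H -p -o name,size,free`."""
    pools: dict[str, tuple[int, int]] = {}
    for line in text.strip().splitlines():
        name, size, free = line.split("\t")
        pools[name] = (int(size), int(free))
    return pools


def pools_below_threshold(text: str, min_free: float = MIN_FREE_FRACTION) -> dict[str, float]:
    """Return {pool: free_fraction} for every pool whose free space is under min_free."""
    low: dict[str, float] = {}
    for name, (size, free) in parse_zpool_list(text).items():
        fraction = free / size
        if fraction < min_free:
            low[name] = fraction
    return low


# Made-up sample output: bpool is about 17.5% free, rpool is 30% free.
SAMPLE = "bpool\t2000000000\t350000000\nrpool\t500000000000\t150000000000\n"

for pool, fraction in pools_below_threshold(SAMPLE).items():
    print(f"{pool}: {fraction:.1%} free, below the 20% minimum")
```

On a real system, `SAMPLE` would be replaced by the captured output of that `zpool list` command.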

It was my first experience, in more than 15 years of using Ubuntu, where keeping it up to date wasn’t just a matter of using it and running updates every once in a while.

Windows has a feature called System Restore, with “restore points” that I’ve used to get back to a working system when things have been broken to the point of making the pc hard or impossible to use. Ubuntu didn’t really have anything equivalent until ZFS was introduced. As I’ve learned, that’s a far too simple-minded description that doesn’t give credit to ZFS capabilities going well beyond Windows restore points. True, and so be it.

I dug through many ZFS web pages (a list of links is at the end of this post) and tried many things until I finally got more than 20% free on the affected pool, rpool or bpool, on each pc. Now the pcs are back to updating without complaint.
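Deleting files didn’t help because ZFS snapshots keep referencing the blocks of files that existed when each snapshot was taken. That space is only released once every snapshot holding it has been destroyed. ZFS tracks this per dataset in the `usedbysnapshots` property, which is the space that would be freed if all of that dataset’s snapshots were destroyed. The minimal Python sketch below assumes input from `zfs list -H -p -t filesystem -o name,usedbysnapshots` and totals that property per pool to show how much space snapshots are holding. The function name, the dataset names in the sample (such as `rpool/ROOT/ubuntu_abc123`), and the byte counts are invented for illustration.

```python
from __future__ import annotations

from collections import defaultdict


def snapshot_space_by_pool(text: str) -> dict[str, int]:
    """Sum usedbysnapshots per pool from `zfs list -H -p -t filesystem -o name,usedbysnapshots`.

    The pool is the first component of each dataset name, e.g. "rpool" in
    "rpool/ROOT/ubuntu_abc123".
    """
    totals: defaultdict[str, int] = defaultdict(int)
    for line in text.strip().splitlines():
        name, used_by_snapshots = line.split("\t")
        pool = name.split("/", 1)[0]
        totals[pool] += int(used_by_snapshots)
    return dict(totals)


# Invented sample output; real ZSys dataset names carry a generated suffix.
SAMPLE = (
    "bpool\t0\n"
    "bpool/BOOT\t0\n"
    "bpool/BOOT/ubuntu_abc123\t1200000000\n"
    "rpool\t0\n"
    "rpool/ROOT/ubuntu_abc123\t30000000000\n"
    "rpool/USERDATA/me_def456\t25000000000\n"
)

for pool, held in snapshot_space_by_pool(SAMPLE).items():
    print(f"{pool}: {held / 1e9:.1f} GB held by snapshots")
```

A large total here, compared with the free space reported by `zpool list`, would explain why moving gigabytes of files off the drive barely changed the pool’s free percentage.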

What I’ve learned is that Ubuntu has a way to go to make ZFS user-friendly. Here’s what I’d suggest to Canonical for desktop Ubuntu:

- Double the recommended minimum drive size and/or tell end users they should have 2x the drive space they think they need if they already think they need more than the minimum

- Reduce the default number of snapshots to 10

- Provide a UI for setting the number of snapshots

- Provide a UI for selectively removing snapshots from bpool or rpool when free space goes below the dreaded 20%

- When free space drops below 20%, prompt for confirmation and then automatically remove the oldest snapshots to get back to 20% free
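The last suggestion could work roughly like the minimal Python sketch below. It only plans a cleanup and destroys nothing. It sorts snapshots oldest first and picks them until the estimated free space reaches 20% of the pool. The estimate adds each snapshot’s `used` value, which is the space unique to that snapshot. That makes it a lower bound, because destroying several snapshots can free more than the sum of their individual `used` values: blocks shared only among them are released too. The `Snapshot` class, the `plan_cleanup` function, and the snapshot names are hypothetical. Real inputs could come from `zfs list -H -p -t snapshot -o name,creation,used`, where `-p` prints `creation` as a Unix timestamp. Before destroying anything for real, `zfs destroy -nv` does a dry run that reports how much space would be reclaimed.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Snapshot:
    name: str      # e.g. "bpool/BOOT/ubuntu_abc123@autozsys_a" (hypothetical)
    creation: int  # Unix timestamp, as printed by `zfs list -p`
    used: int      # bytes unique to this snapshot


def plan_cleanup(size: int, free: int, snapshots: list[Snapshot],
                 min_free: float = 0.20) -> list[Snapshot]:
    """Pick the oldest snapshots until estimated free space reaches min_free of size.

    The estimate adds each snapshot's `used` bytes, a lower bound on what is
    actually freed. The returned list may fall short of the target if there
    are not enough snapshots. Nothing is destroyed here.
    """
    target = min_free * size
    estimate = free
    chosen: list[Snapshot] = []
    for snap in sorted(snapshots, key=lambda s: s.creation):
        if estimate >= target:
            break
        chosen.append(snap)
        estimate += snap.used
    return chosen


# Made-up numbers: a 2 GB pool with 15% free and three snapshots.
snaps = [
    Snapshot("bpool/BOOT/ubuntu_abc123@autozsys_c", 1_600_300_000, 50_000_000),
    Snapshot("bpool/BOOT/ubuntu_abc123@autozsys_a", 1_600_100_000, 60_000_000),
    Snapshot("bpool/BOOT/ubuntu_abc123@autozsys_b", 1_600_200_000, 50_000_000),
]
for snap in plan_cleanup(2_000_000_000, 300_000_000, snaps):
    print("would remove", snap.name)
```

Taking the oldest snapshots first matches the suggestion above. A real tool would also need to skip snapshots the user wants to keep and ask for confirmation before acting.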

Both my pcs are now above 20% free space on rpool and bpool and updating without complaint. It took a while and some learning to make that happen. It wasn’t the type of thing an average end user would ever want to face or even know about.

- ZFS focus on Ubuntu 20.04 LTS: ZSys general presentation · ~DidRocks

- ZFS focus on Ubuntu 20.04 LTS: ZSys general principle on state management · ~DidRocks

- ZFS focus on Ubuntu 20.04 LTS: ZSys commands for state management · ~DidRocks

- ZFS focus on Ubuntu 20.04 LTS: ZSys state collection · ~DidRocks

- ZFS focus on Ubuntu 20.04 LTS: ZSys for system administrators · ~DidRocks

- ZFS focus on Ubuntu 20.04 LTS: ZSys partition layout · ~DidRocks

- ZFS focus on Ubuntu 20.04 LTS: ZSys dataset layout · ~DidRocks

- ZFS focus on Ubuntu 20.04 LTS: ZSys properties on ZFS datasets · ~DidRocks

- apt – Out of space on boot zpool and cant run updates anymore – Ask Ubuntu (for this link, see especially Hannu’s answer from Nov 19, 2020 at 17:22)

- docs.oracle.com | Displaying and Accessing ZFS Snapshots

- docs.oracle.com | Destroying a ZFS File System

- docs.oracle.com | Creating and Destroying ZFS Snapshots